Serverless Data Engineering: How to Generate Parquet Files with AWS Lambda and Upload to S3 - YouTube

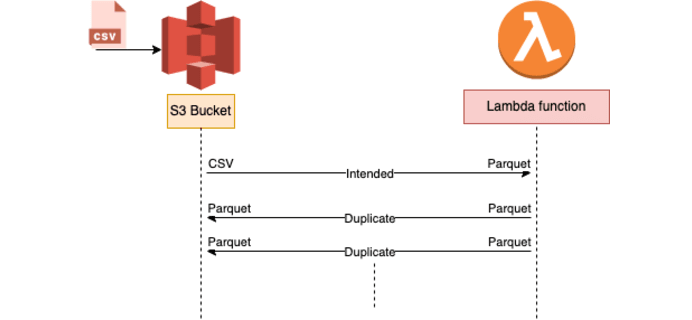

How we accidentally burned $40,000 by calling recursive patterns | by Dheeraj Inampudi | Level Up Coding

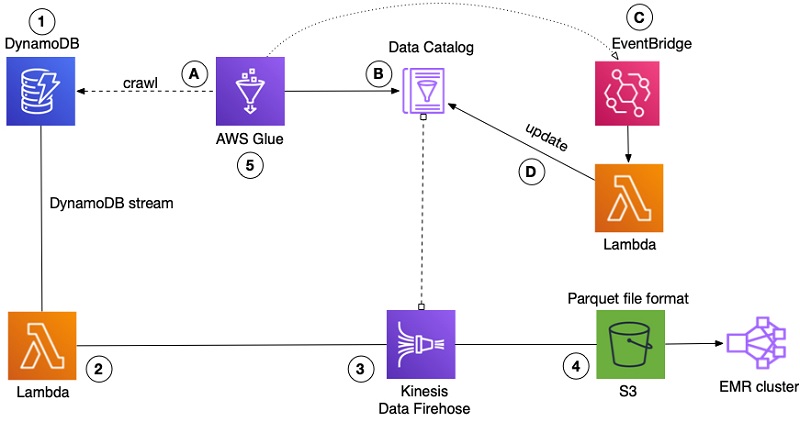

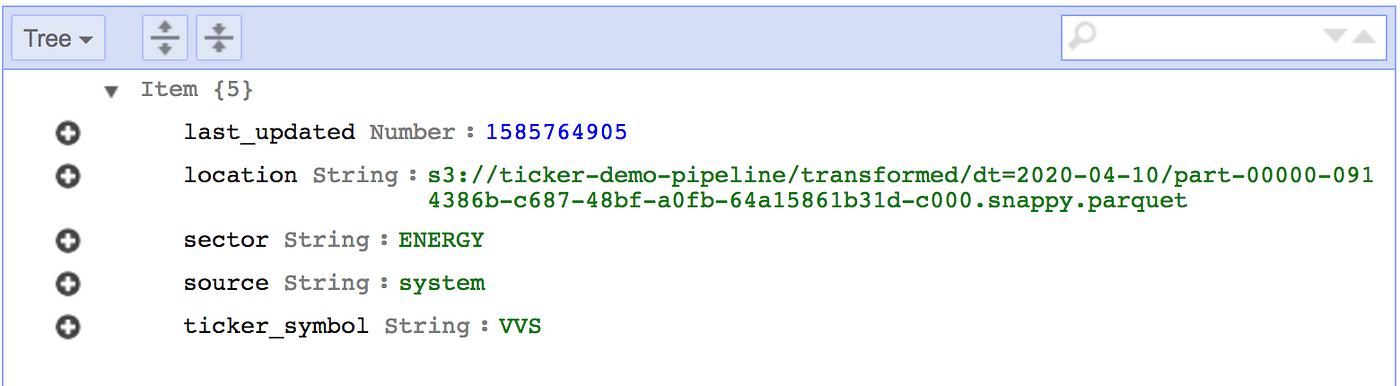

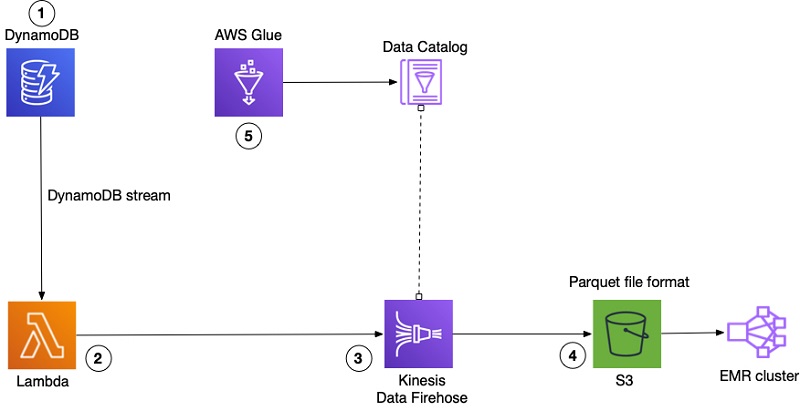

How FactSet automated exporting data from Amazon DynamoDB to Amazon S3 Parquet to build a data analytics platform | AWS Big Data Blog

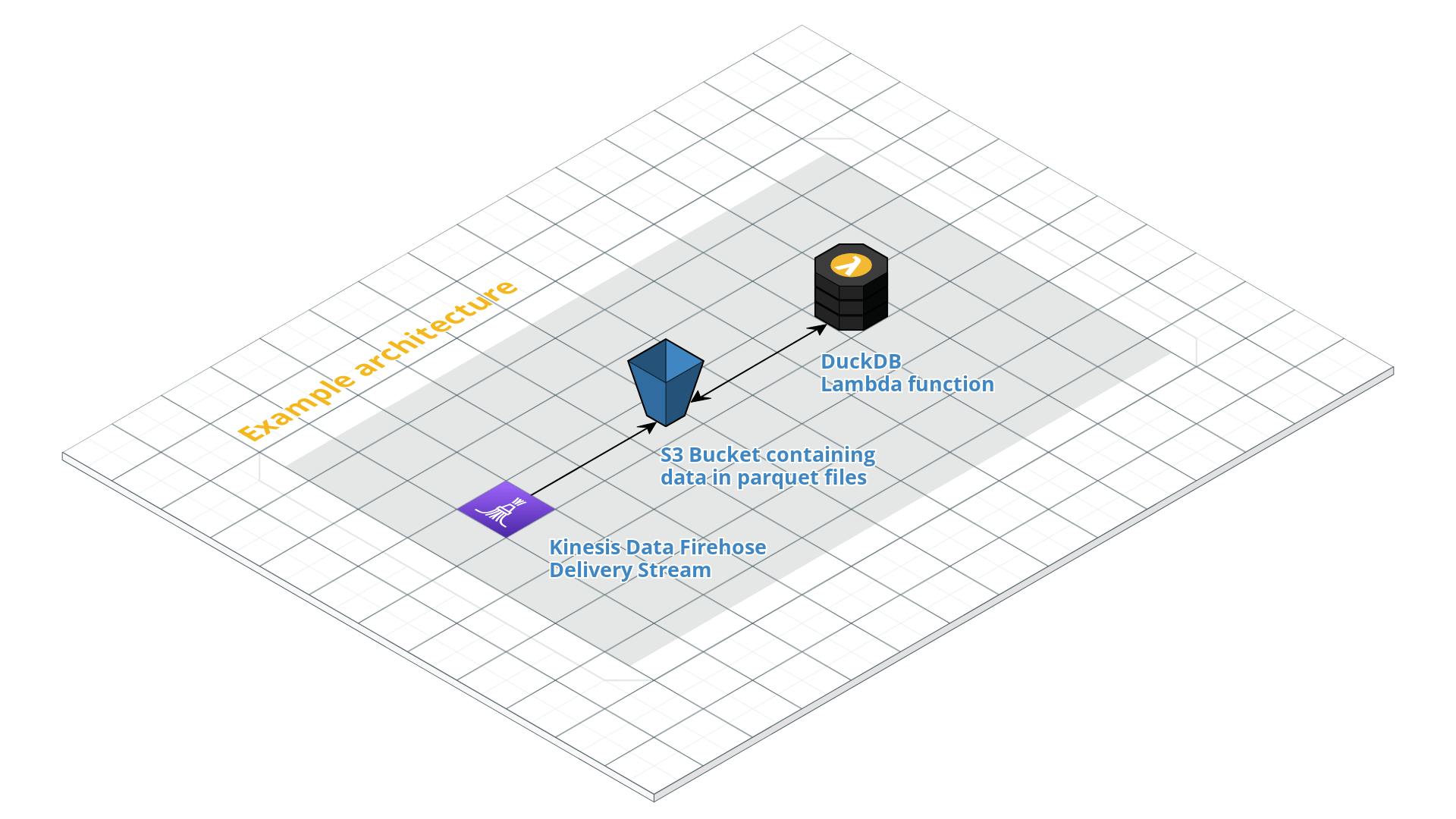

Serverless Conversions From GZip to Parquet Format with Python AWS Lambda and S3 Uploads | The Coding Interface

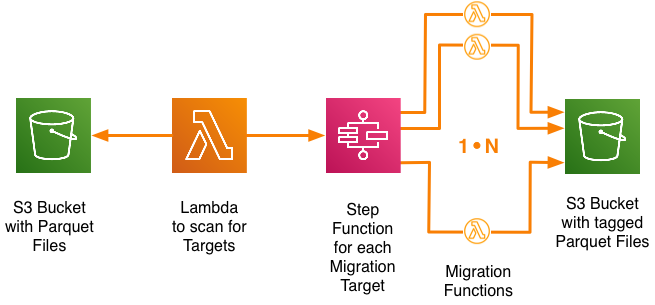

Controlled schema migration of large scale S3 Parquet data sets with Step Functions in a massively parallel manner | by Klaus Seiler | merapar | Medium

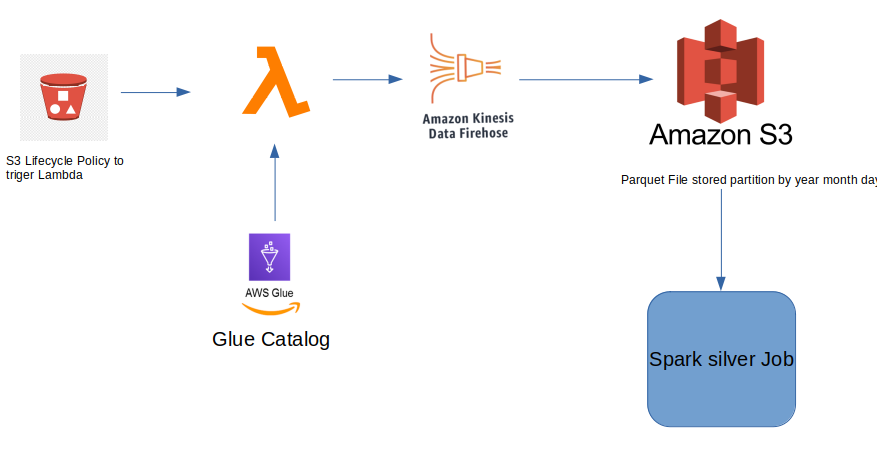

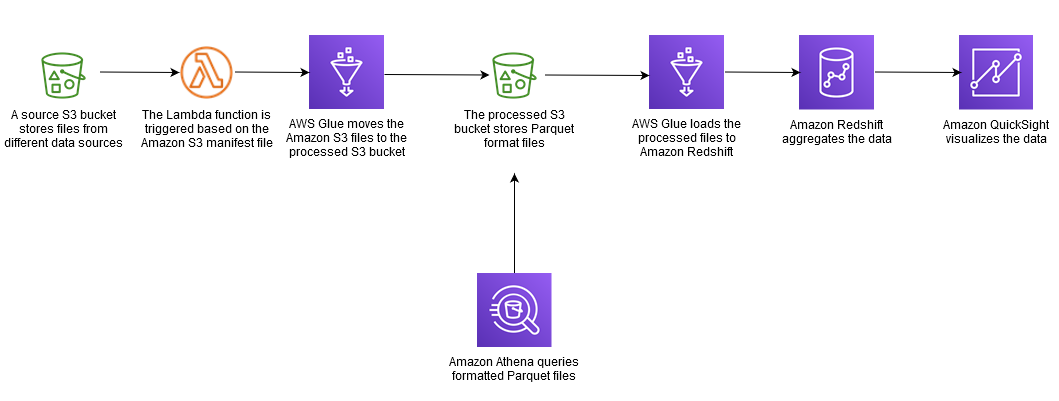

Build an ETL service pipeline to load data incrementally from Amazon S3 to Amazon Redshift using AWS Glue - AWS Prescriptive Guidance

Integrate your Amazon DynamoDB table with machine learning for sentiment analysis | AWS Database Blog